Neural style transfer, the technique of producing images that combine the content of one image with the style of another, emerged roughly one year ago. It all started with a paper by Gatys et al. describing a method that represents style with Gram matrices computed from the feature maps of a convolutional neural network, and software implementations soon appeared on GitHub. Probably the most successful has been neural-style, which quickly gathered a large user base and also served as a basis for further development using modified or more advanced approaches.

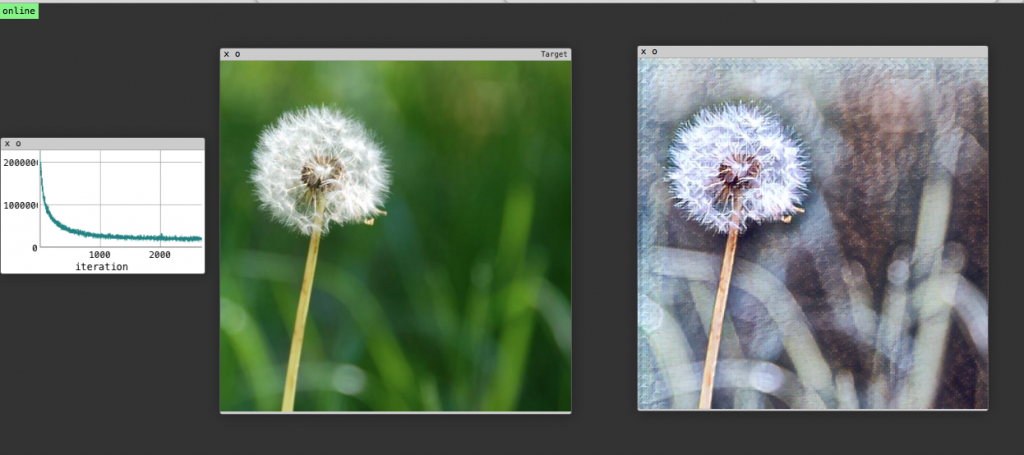

Neural-style by Justin Johnson is robust, versatile and relatively easy to install once one is familiar with the Linux command line and with installing typical GitHub applications. It works reasonably well with different types of images and styles, and although the available parameters may seem bewildering to a newcomer, they work quite well once one gets used to them. The main issues for users have been speed and scalability. Because neural-style works by iteration, it takes time to produce an image, although a GPU helps a lot. Personally, I have not found speed to be a problem, even though until recently I ran the program without a GPU. I had neural-style output intermediate results every 50 iterations, to monitor how the image emerges and to learn from the process.

Scalability has been a more serious issue. The size of the images one can make is limited by memory, and most people have at most 8GB of RAM on their GPU. One approach would be to use the GPU to find the right settings and then use the CPU, with 32GB or more of RAM, to produce a higher-resolution image. Unfortunately, the style transfer itself does not scale: the look of the resulting image changes significantly when the image size is doubled, and changing the parameter settings has not solved this. It might be that, because of the size of the images used to train the neural model, it responds differently to features when the scale changes. So far, the most promising answer to the scaling issue is super-resolution software, especially software that uses a convolutional neural network to upscale an image.
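To illustrate the hypothesis above, here is a toy sketch of my own (not code from neural-style): a filter with a fixed footprint in pixels, like the small convolution kernels in a CNN, responds differently to the same pattern once the image is upscaled. The horizontal difference filter stands in for a learned feature detector, and the stripe pattern is an arbitrary test image.

```python
import numpy as np


def mean_edge_response(img: np.ndarray) -> float:
    """Mean absolute response of the fixed 1x2 filter [-1, 1] applied along rows."""
    return float(np.abs(np.diff(img, axis=1)).mean())


def upscale_nearest(img: np.ndarray, factor: int) -> np.ndarray:
    """Nearest-neighbor upscaling by an integer factor along both axes."""
    return np.repeat(np.repeat(img, factor, axis=0), factor, axis=1)


# Vertical stripes with a period of 2 pixels: each row is 0, 1, 0, 1, ...
stripes = np.tile(np.array([0.0, 1.0]), (8, 8))
doubled = upscale_nearest(stripes, 2)  # same pattern, twice the size

print(mean_edge_response(stripes))  # 1.0: every neighboring pair differs
print(mean_edge_response(doubled))  # about 0.48: only every other pair differs
```

The same pattern now produces roughly half the filter response, so a model tuned at one scale effectively sees different features at another. Whether this fully explains the scaling behavior of neural-style remains a guess.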

The emergence of services and apps like deepart.io, Pikazo and Prisma has to some extent set a standard for what users expect of neural-style. A standard question is “how can I make neural-style look like X?”. One may wonder whether one wants one's pictures to look like everyone else's, but of course trying to solve this is a good learning exercise for a newcomer. Furthermore, this kind of test can serve as a benchmark for neural style transfer applications.

The services and apps also make speed and memory footprint more important, especially for those intending to develop and maintain such services. Recently, new approaches and implementations have appeared, based on the idea of a feedforward neural network that directly outputs an image. Remember, neural-style only takes in an image, measures how close or far it is from the content and style goals, and iterates until a satisfactory result is reached. But a neural model can also be constructed to output an image directly, which makes it possible, after proper training, to feed in an image and get the stylized image out of the other end in a single pass.
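To make the iterative idea concrete, here is a minimal sketch of such an optimization loop under a strong simplifying assumption: the "features" are the raw pixel channels rather than the activations of a pretrained CNN, so it only matches color statistics. It keeps the ingredients described by Gatys et al.: a content loss, a style loss on Gram matrices, and gradient descent on the image itself. It also saves an intermediate result every 50 iterations, as I did with neural-style (where, as far as I know, the `-save_iter` option controls this). The function names, weights and learning rate are my own choices, not neural-style's.

```python
import numpy as np


def gram(features: np.ndarray) -> np.ndarray:
    """Gram matrix of a (channels, height, width) array, divided by the number of positions."""
    f = features.reshape(features.shape[0], -1)  # (C, N)
    return f @ f.T / f.shape[1]  # (C, C)


def stylize(content, style, content_weight=1.0, style_weight=10.0,
            steps=200, lr=0.5, save_every=50):
    """Toy iterative style transfer: gradient descent on the image itself.

    Assumption: the features are raw pixel channels, not CNN activations.
    """
    x = content.astype(float).copy()
    c, n = x.shape[0], x[0].size
    target = gram(style)
    snapshots, losses = [], []
    for step in range(1, steps + 1):
        f = x.reshape(c, -1)
        g_diff = gram(x) - target
        content_loss = np.mean((x - content) ** 2)
        style_loss = np.sum(g_diff ** 2)
        losses.append(content_weight * content_loss + style_weight * style_loss)
        # Analytic gradients of both losses with respect to the pixels.
        grad_content = 2.0 * (x - content) / x.size
        grad_style = (4.0 / n) * (g_diff @ f).reshape(x.shape)
        x -= lr * (content_weight * grad_content + style_weight * grad_style)
        if step % save_every == 0:
            snapshots.append(x.copy())  # intermediate result for monitoring
    return x, snapshots, losses


rng = np.random.default_rng(0)
content = rng.random((3, 16, 16))
style = rng.random((3, 16, 16)) ** 3  # darker image with different channel statistics
result, snapshots, losses = stylize(content, style)
print(len(snapshots), losses[0] > losses[-1])  # 4 True
```

The cost is visible in the structure: every output image needs its own run of `steps` gradient updates, which is exactly what the feedforward approaches avoid.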

Texture_nets by Dmitry Ulyanov does exactly that. First one has to train the model on a style, using one style image and a set of training images. There is a very nice browser-based user interface for monitoring the training process.

When the training is done, usually after an hour or two on a GPU, the style transfer is really fast. The first time, I thought the program had terminated without doing anything, until I noticed that an image had indeed appeared in the folder.

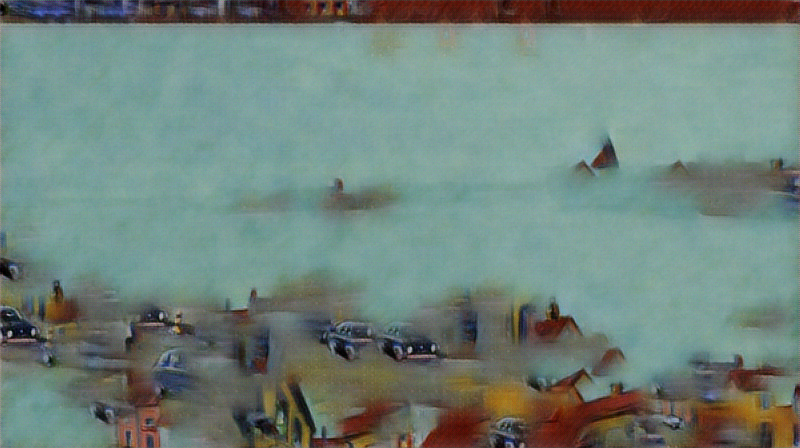

Training the styles is somewhat tricky, though. Experience with neural-style is not necessarily of much help. So far I find the range of styles I can create curiously limited: regardless of the style images I use, the results share a characteristic feel that is difficult to define. Also, the style of the results can be great, but it is most often different from what you expected from looking at the style image. Partly, this may be due to scale. Unlike neural-style, texture_nets does not appear to scale the materials; instead, it crops them during training. Therefore, scaling the training and style images properly beforehand is probably crucial.
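If the inputs are cropped rather than rescaled, the size of the style image relative to the crop decides which scale of detail the network gets to learn. The sketch below shows one way to rescale images beforehand so that a fixed-size crop covers a sensible part of the image. The helper names, the nearest-neighbor resampling and the 256-pixel crop size are assumptions for illustration, not texture_nets code or defaults; in practice an image library with proper resampling would be the better choice.

```python
import numpy as np


def resize_nearest(img: np.ndarray, out_h: int, out_w: int) -> np.ndarray:
    """Nearest-neighbor resize of an (H, W) or (H, W, C) array."""
    h, w = img.shape[:2]
    rows = np.arange(out_h) * h // out_h
    cols = np.arange(out_w) * w // out_w
    return img[rows[:, None], cols[None, :]]


def rescale_short_side(img: np.ndarray, short_side: int) -> np.ndarray:
    """Rescale so that the shorter side becomes `short_side` pixels."""
    h, w = img.shape[:2]
    scale = short_side / min(h, w)
    return resize_nearest(img, max(1, round(h * scale)), max(1, round(w * scale)))


def random_crop(img: np.ndarray, size: int, rng: np.random.Generator) -> np.ndarray:
    """Square crop at a random position; the image must be at least `size` pixels each way."""
    h, w = img.shape[:2]
    top = int(rng.integers(0, h - size + 1))
    left = int(rng.integers(0, w - size + 1))
    return img[top:top + size, left:left + size]


crop = 256
style = np.zeros((2048, 3072, 3), dtype=np.uint8)  # stand-in for a large style image
for short_side in (2048, 512):
    scaled = rescale_short_side(style, short_side)
    covered = crop * crop / (scaled.shape[0] * scaled.shape[1])
    print(scaled.shape, f"one crop covers {covered:.1%} of the image")
```

With a 256-pixel crop, each training crop sees only about 1% of the full-size image but about 17% of the downscaled one, so the two versions teach the network quite different brushstroke scales.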

So, texture_nets is definitely a great tool and it offers an interesting challenge. You can get great results, but you cannot expect it to work just like neural-style out of the box.

Although I have used texture_nets here as an example of a feedforward solution, it was not the first to use this approach. Justin Johnson, the author of neural-style, presented a paper on the approach in March 2016. He has not published his source code, but at least one implementation of the approach has been published on GitHub: chainer-fast-neuralstyle.

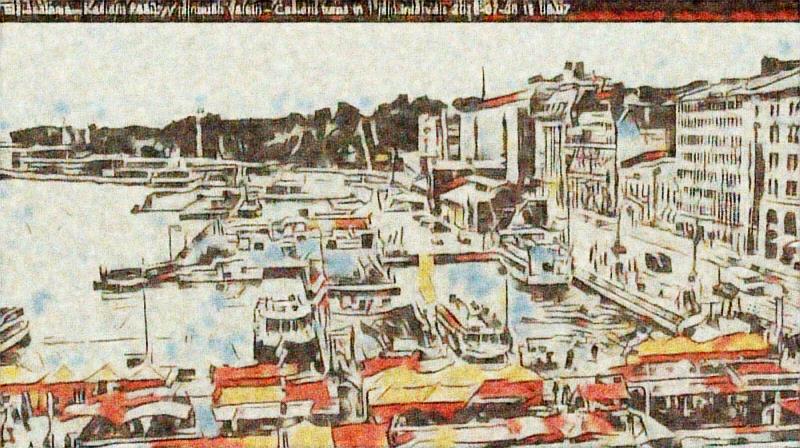

Neural-doodle by Alex J. Champandard uses a different approach. Instead of Gram matrices, it uses MRFs (Markov random fields): the style is broken into small patches, which are then used to assemble the final image. This approach is very different from neural-style and requires much care in setting the parameters. Also, whereas the statistical approach of Gram matrices captures the overall appearance of the style, the patch-based approach also retains some of the spatial structure of the style image, and it links the semantic structures of the content and style images. It can, for instance, replace eyes with stylized eyes from the style image. But one can very easily get results that look like mashups of clippings from the style image, like in this image.
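The core of the patch-based idea can be sketched in a few lines: cut both images into small overlapping patches, replace every content patch with its most similar style patch (measured here by normalized cross-correlation, i.e. cosine similarity), and average the overlapping replacements back into an image. This is my own simplified illustration on grayscale pixels. Neural-doodle matches patches of CNN feature maps, adds semantic maps, and, as far as I understand, uses the matches inside an optimization rather than pasting patches directly.

```python
import numpy as np


def extract_patches(img: np.ndarray, size: int, stride: int = 1) -> np.ndarray:
    """All size x size patches of a 2-D array, each flattened to one row."""
    h, w = img.shape
    return np.array([img[i:i + size, j:j + size].ravel()
                     for i in range(0, h - size + 1, stride)
                     for j in range(0, w - size + 1, stride)])


def match_patches(content_patches: np.ndarray, style_patches: np.ndarray,
                  eps: float = 1e-8) -> np.ndarray:
    """Index of the most similar style patch for every content patch (cosine similarity)."""
    c = content_patches / (np.linalg.norm(content_patches, axis=1, keepdims=True) + eps)
    s = style_patches / (np.linalg.norm(style_patches, axis=1, keepdims=True) + eps)
    return np.argmax(c @ s.T, axis=1)


def reassemble(style_patches, matches, shape, size, stride=1):
    """Paste the matched style patches back, averaging where they overlap."""
    out = np.zeros(shape)
    weight = np.zeros(shape)
    k = 0
    for i in range(0, shape[0] - size + 1, stride):
        for j in range(0, shape[1] - size + 1, stride):
            out[i:i + size, j:j + size] += style_patches[matches[k]].reshape(size, size)
            weight[i:i + size, j:j + size] += 1
            k += 1
    return out / np.maximum(weight, 1)


rng = np.random.default_rng(0)
content = rng.random((32, 32))
style = rng.random((48, 48))
size = 3
style_patches = extract_patches(style, size)
matches = match_patches(extract_patches(content, size), style_patches)
result = reassemble(style_patches, matches, content.shape, size)
print(result.shape)  # (32, 32)
```

Because every output pixel is literally built from style pixels, it is easy to see how this family of methods can drift toward a collage of clippings when the matches are poor.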

The basic neural-doodle is not a feedforward solution, but there is such a version in the forward branch of the project on GitHub. It is somewhat tricky to install, but once you get it working it is remarkably fast even on a CPU (most images are made in 15 to 45 seconds). It uses a feedforward network but still runs a few iterations. I have not delved into the details of the code, but I guess the iterations are related to getting the style patches right.

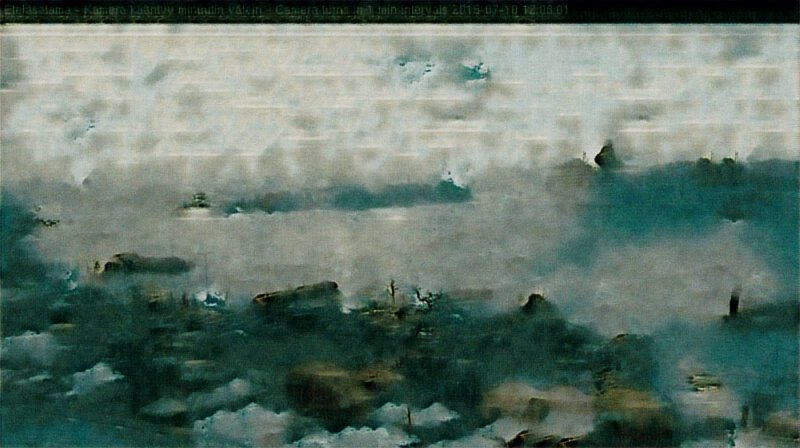

Despite its quirks, neural-doodle/forward is a formidable tool. I have had it running for days, converting a webcam view using random settings and style images. Besides being fast, neural-doodle/forward has a slices parameter that apparently helps to create larger images with limited memory. I have not thoroughly tested this feature, but it helped in a few cases when I was running out of memory. Nor have I experimented with how well the style transfer works at different image sizes.
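I do not know how neural-doodle implements its slices parameter, but the general idea of trading memory for extra passes can be sketched: split the image into horizontal strips, run the model on each strip with a few overlapping rows of context, and stitch the central parts back together, so that peak memory depends on the strip size rather than on the whole image. The function name, the `overlap` default and the stand-in model are hypothetical. One caveat for style transfer: statistics are then computed per strip, which may cause seams or slight style differences between strips.

```python
from typing import Callable

import numpy as np


def process_in_slices(img: np.ndarray,
                      process: Callable[[np.ndarray], np.ndarray],
                      n_slices: int, overlap: int = 16) -> np.ndarray:
    """Run `process` on horizontal strips of `img` and stitch the results.

    Each strip gets up to `overlap` extra rows of context above and below;
    those rows are cropped away again before stitching. `process` must
    return an array with the same shape as its input.
    """
    h = img.shape[0]
    bounds = np.linspace(0, h, n_slices + 1).astype(int)
    out = np.empty_like(img)
    for top, bottom in zip(bounds[:-1], bounds[1:]):
        lo, hi = max(0, top - overlap), min(h, bottom + overlap)
        result = process(img[lo:hi])
        start = top - lo  # where the strip's own rows begin inside `result`
        out[top:bottom] = result[start:start + (bottom - top)]
    return out


heights = []


def fake_model(strip: np.ndarray) -> np.ndarray:
    """Stand-in for a style transfer model: records the strip height, inverts values."""
    heights.append(strip.shape[0])
    return 1.0 - strip


image = np.random.default_rng(0).random((100, 40, 3))
stylized = process_in_slices(image, fake_model, n_slices=4)
print(heights)  # [41, 57, 57, 41]: no single pass sees all 100 rows
```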

Which of these three applications is best? For me, the question is not really meaningful. All three packages are valuable tools, and they are all different. It is not easy to replace one with another and get a similar result; frankly, I suspect that in many cases it is not even possible. Furthermore, there are many more implementations and approaches available, with new research being published every day. Things we did not even believe possible are already happening.
